How to use vLLM as the LLM backend for Ozeki Voice Keyboard on Ubuntu

This guide demonstrates how to configure a vLLM server on Ubuntu as the LLM backend for Ozeki Voice Keyboard on Windows. By running vLLM on a dedicated Ubuntu machine, the AI assistant feature can leverage GPU-accelerated inference over the network: your speech is transcribed by the configured voice model, the resulting text is forwarded to vLLM as a prompt, and the generated response is automatically inserted into the active input field on your Windows machine.

How it works

The diagram below illustrates the full pipeline of the AI assistant feature across the two machines.

Steps to follow

Before proceeding, make sure Anaconda is installed on your Ubuntu machine. A CUDA-compatible NVIDIA GPU is required for GPU-accelerated inference.

The vllm package will be installed via pip during the setup process.

- Set up the Conda environment

- Install vLLM

- Start the vLLM server

- Connect vLLM to Ozeki Voice Keyboard

Quick reference commands

# Conda environment
conda create -n vllm python=3.12
conda activate vllm

# Install vLLM
pip install vllm

# Start the vLLM server
vllm serve Qwen/Qwen3.5-9B \
  --enforce-eager \
  --max-num-seqs 1 \
  --max-model-len 8192 \
  --gpu-memory-utilization 0.95
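If you prefer to run the quick reference as a single script, the steps can be wrapped in one bash function. This is a minimal sketch, not part of Ozeki's or vLLM's tooling: the function name setup_and_serve_vllm is hypothetical, it assumes Conda is on your PATH and that no environment named vllm exists yet, the -y flag answers Conda's confirmation prompt automatically, and the `conda shell.bash hook` line is there because scripts do not load the shell function that `conda activate` relies on.

```shell
# Hypothetical helper: the quick reference steps in one function.
# Assumes conda is on PATH and the 'vllm' environment does not exist yet.
setup_and_serve_vllm() {
  # Load conda's shell integration so `conda activate` works in a script.
  eval "$(conda shell.bash hook)" || return 1
  # -y skips the interactive "Proceed ([y]/n)?" prompt.
  conda create -y -n vllm python=3.12 || return 1
  conda activate vllm || return 1
  pip install vllm || return 1
  # Same server options as the quick reference; runs in the foreground.
  vllm serve Qwen/Qwen3.5-9B \
    --enforce-eager \
    --max-num-seqs 1 \
    --max-model-len 8192 \
    --gpu-memory-utilization 0.95
}
```

Source the file that contains the function and run `setup_and_serve_vllm` once on a fresh machine; on later sessions only the `conda activate vllm` and `vllm serve` steps are needed.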

How to set up vLLM on Ubuntu video

The following video shows how to set up and run the vLLM server on Ubuntu step-by-step. The video covers creating the Conda environment, installing vLLM, and starting the server.

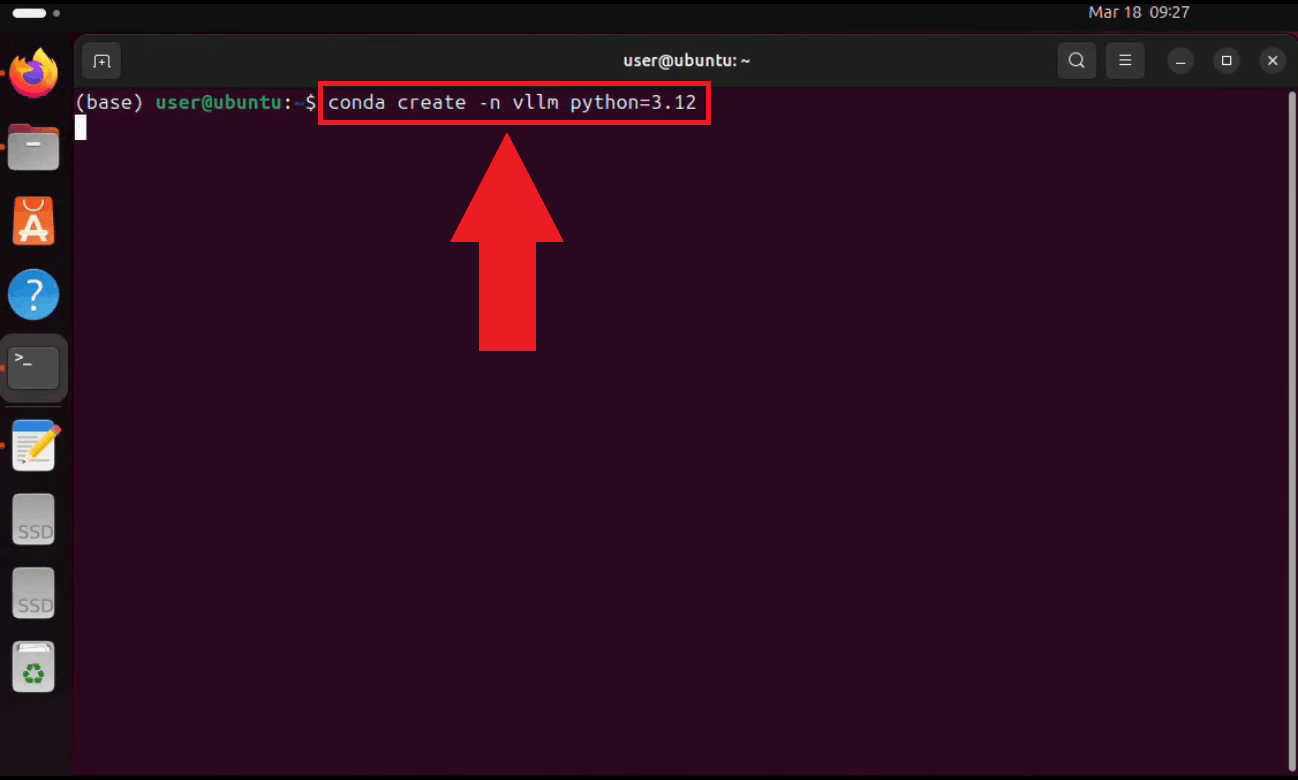

Step 1 - Set up the Conda environment

Open a terminal on your Ubuntu machine and create a dedicated Conda environment with Python 3.12. Using a separate environment keeps vLLM's dependencies isolated from other projects on your system (Figure 1).

conda create -n vllm python=3.12

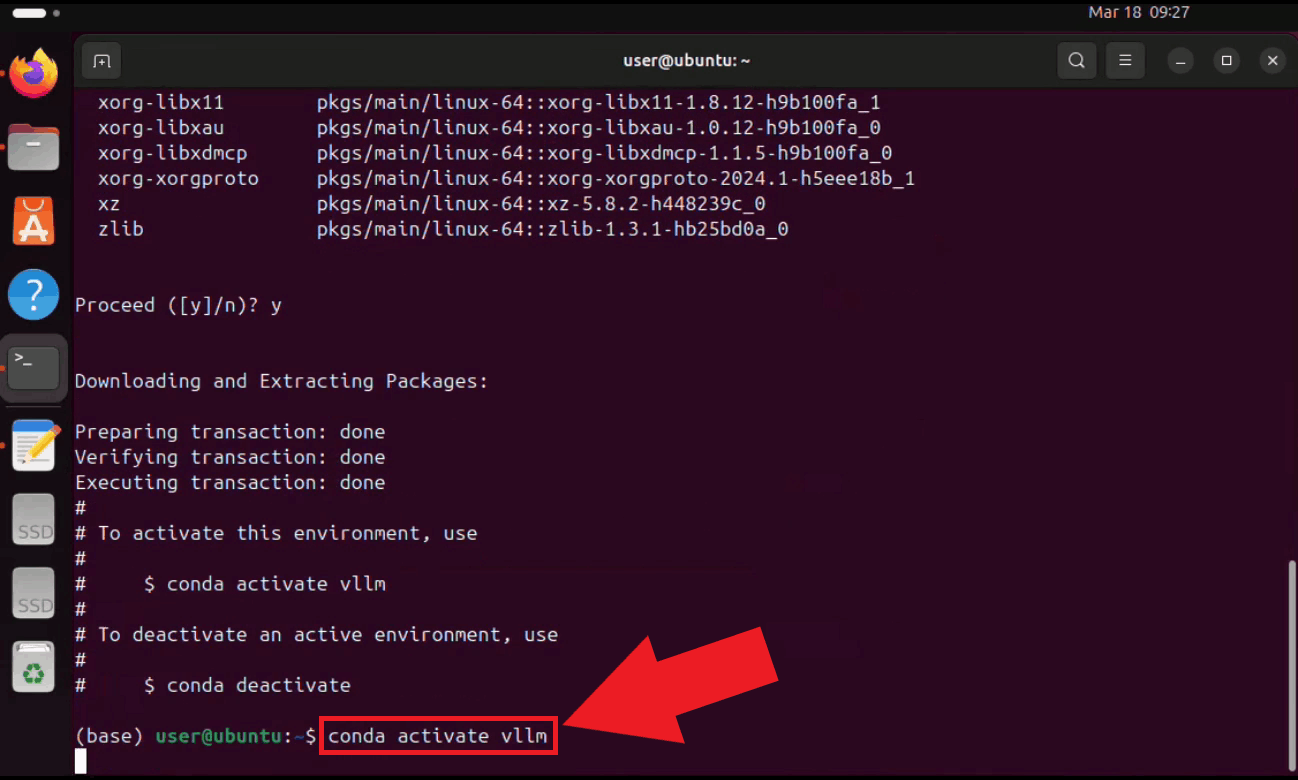

Activate the environment. Your terminal prompt will update to reflect the active environment name (Figure 2).

conda activate vllm

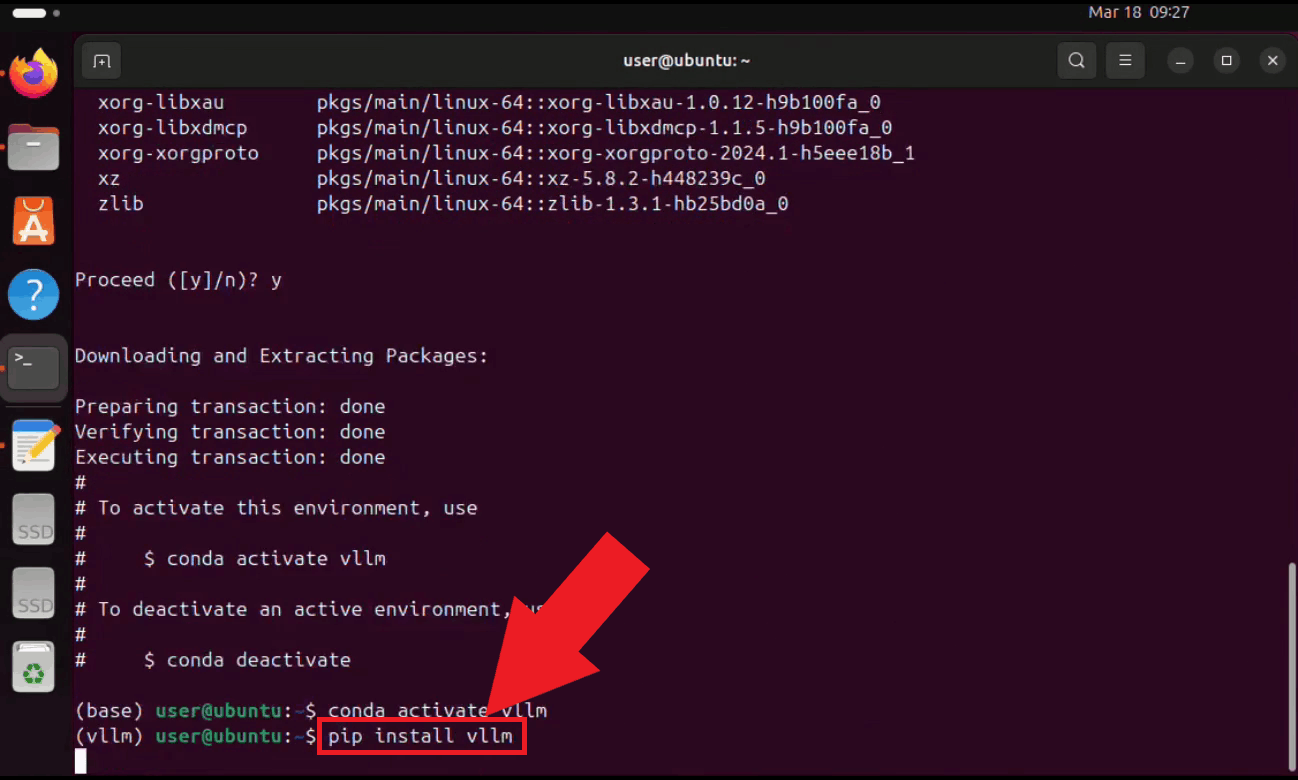
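If you want to confirm from a script, rather than by looking at the prompt, that the right environment is active, you can check the CONDA_DEFAULT_ENV variable, which `conda activate` sets to the environment name. The helper below is a hypothetical sketch; check_vllm_env is not a Conda command.

```shell
# Hypothetical helper, not a Conda command: succeeds only when the
# 'vllm' environment is active, based on CONDA_DEFAULT_ENV, which
# `conda activate` sets to the active environment's name.
check_vllm_env() {
  if [ "${CONDA_DEFAULT_ENV:-}" = "vllm" ]; then
    echo "vllm environment is active ($(python --version 2>&1))"
  else
    echo "vllm environment is not active; run: conda activate vllm" >&2
    return 1
  fi
}
```

Running `check_vllm_env` after `conda activate vllm` should report the environment as active along with the Python 3.12 version string.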

Step 2 - Install vLLM

With the environment active, install vLLM using pip. pip automatically downloads vLLM together with all of the dependencies it needs to serve large language models with GPU acceleration, including PyTorch (Figure 3).

pip install vllm

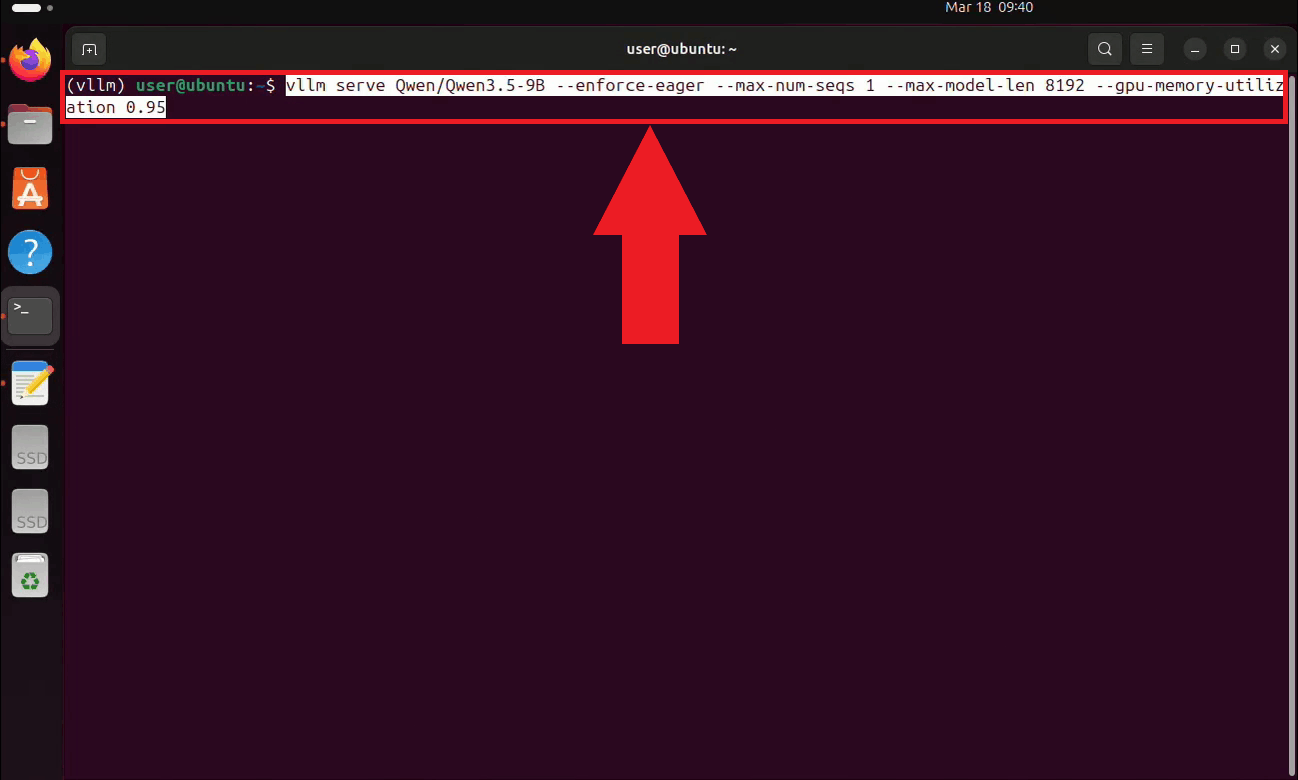
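A quick way to confirm the installation is to import vLLM and ask PyTorch whether it can see the GPU. This sketch assumes the vllm environment is active and the NVIDIA driver is installed (nvidia-smi ships with the driver); verify_vllm_install is a hypothetical name.

```shell
# Hypothetical helper: confirm that vLLM imports, print its version, and
# check that PyTorch (installed as a vLLM dependency) can see a CUDA GPU.
verify_vllm_install() {
  python -c 'import torch, vllm; print("vLLM", vllm.__version__); print("CUDA available:", torch.cuda.is_available())' || return 1
  # nvidia-smi lists the GPUs visible to the driver and their memory usage.
  nvidia-smi || return 1
}
```

If `CUDA available:` prints False, PyTorch cannot see the GPU, and vLLM's GPU build will not be able to serve the model until the driver issue is resolved.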

Step 3 - Start the vLLM server

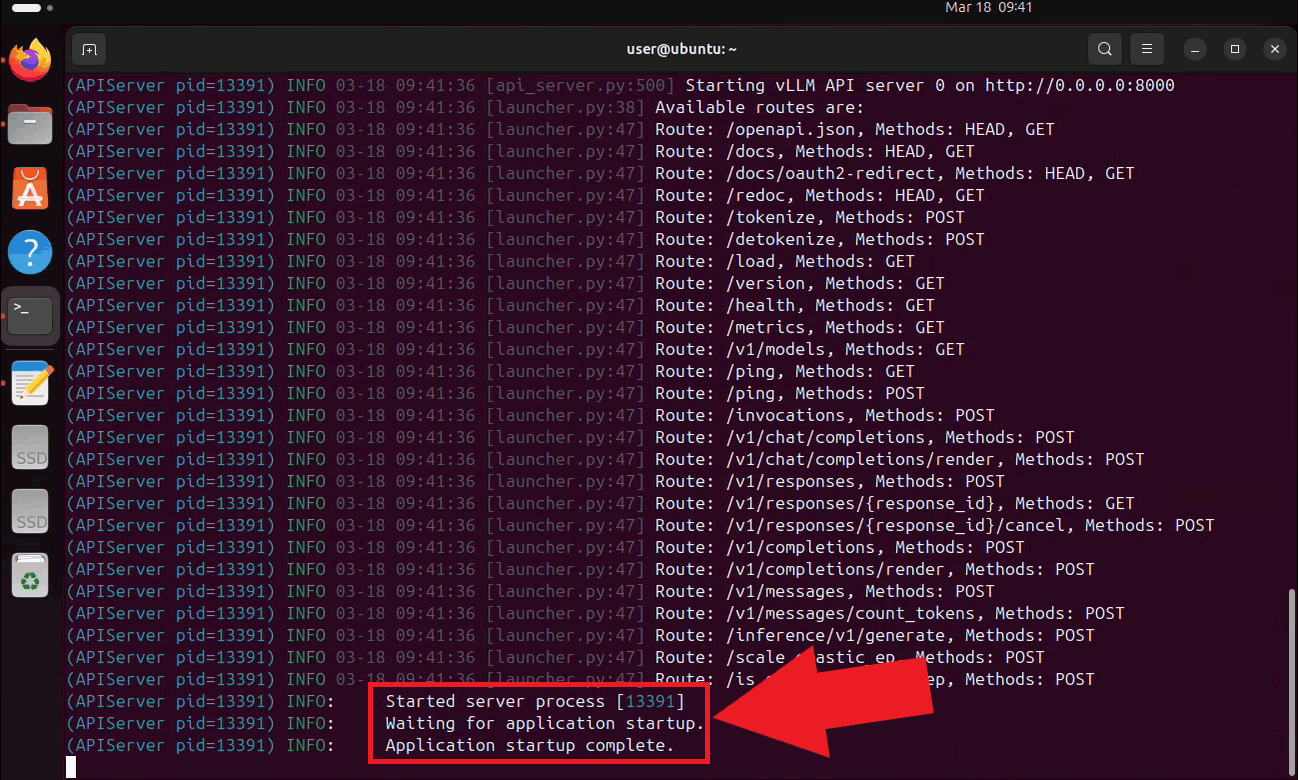

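Start the vLLM server with the Qwen 3.5 9B model. On the first run, vLLM automatically downloads the model from Hugging Face, which may take several minutes depending on your connection speed. The options configure the server as follows (Figure 4):

- --enforce-eager runs the model in eager mode instead of capturing CUDA graphs, which reduces memory overhead and startup time

- --max-num-seqs 1 limits the server to one concurrent sequence, which suits a single user sending one prompt at a time

- --max-model-len 8192 caps the context length at 8192 tokens

- --gpu-memory-utilization 0.95 lets vLLM use up to 95% of the GPU's memory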

vllm serve Qwen/Qwen3.5-9B \
  --enforce-eager \
  --max-num-seqs 1 \
  --max-model-len 8192 \
  --gpu-memory-utilization 0.95
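The --gpu-memory-utilization value is a fraction of the GPU's total memory that vLLM may reserve for the model weights, activations, and KV cache. The sketch below converts that fraction into MiB for your card; gpu_budget_mib and show_gpu_budget are hypothetical helpers, and the query assumes the model runs on the first NVIDIA GPU.

```shell
# Hypothetical helper: memory (MiB) vLLM may claim for a given
# --gpu-memory-utilization fraction.
# $1 = total GPU memory in MiB, $2 = fraction (defaults to 0.95)
gpu_budget_mib() {
  awk -v total="$1" -v frac="${2:-0.95}" 'BEGIN { printf "%d\n", total * frac }'
}

# Hypothetical helper: read the first GPU's total memory and apply 0.95.
show_gpu_budget() {
  local total
  # nvidia-smi prints one line per GPU; keep the first.
  total=$(nvidia-smi --query-gpu=memory.total --format=csv,noheader,nounits | head -n 1) || return 1
  echo "Total: ${total} MiB, vLLM budget at 0.95: $(gpu_budget_mib "$total") MiB"
}
```

For example, on a card reporting 24576 MiB, `gpu_budget_mib 24576 0.95` prints 23347. If another process already occupies part of the GPU, the server may fail to start with this setting, in which case lowering the fraction is the usual fix.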

The vLLM server is now running and listening for requests on port 8000. Keep this terminal open for the duration of your session. The endpoint is accessible to other machines on your local network at http://{your-ubuntu-ip}:8000/v1, where {your-ubuntu-ip} is the Ubuntu machine's local IP address (you can look it up with hostname -I) (Figure 5).
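Before configuring the Windows side, you can confirm that the endpoint is reachable by requesting the list of served models from /v1/models, which is part of vLLM's OpenAI-compatible API. This is a sketch: vllm_base_url and list_vllm_models are hypothetical helpers, and 192.168.1.50 below is only a placeholder for your Ubuntu machine's address.

```shell
# Hypothetical helper: build the base URL used as the API URL.
# $1 = IP address of the Ubuntu machine, $2 = port (defaults to 8000)
vllm_base_url() {
  printf 'http://%s:%s/v1\n' "$1" "${2:-8000}"
}

# Hypothetical helper: list the models served at that URL.
# GET /v1/models is part of vLLM's OpenAI-compatible API.
list_vllm_models() {
  curl -sS --max-time 10 "$(vllm_base_url "$1" "${2:-}")/models"
}
```

Running `list_vllm_models 192.168.1.50` should return JSON that lists Qwen/Qwen3.5-9B as a model id. If the request times out from another machine, check whether a firewall is blocking port 8000; with Ubuntu's ufw enabled, `sudo ufw allow 8000/tcp` opens it.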

Step 4 - Connect vLLM to Ozeki Voice Keyboard

The following video shows how to connect the Ubuntu vLLM server to Ozeki Voice Keyboard on Windows and verify that the AI assistant is working correctly. The video covers locating the tray icon, enabling HTTP logging, configuring the LLM settings, and confirming the connection through the log viewer.

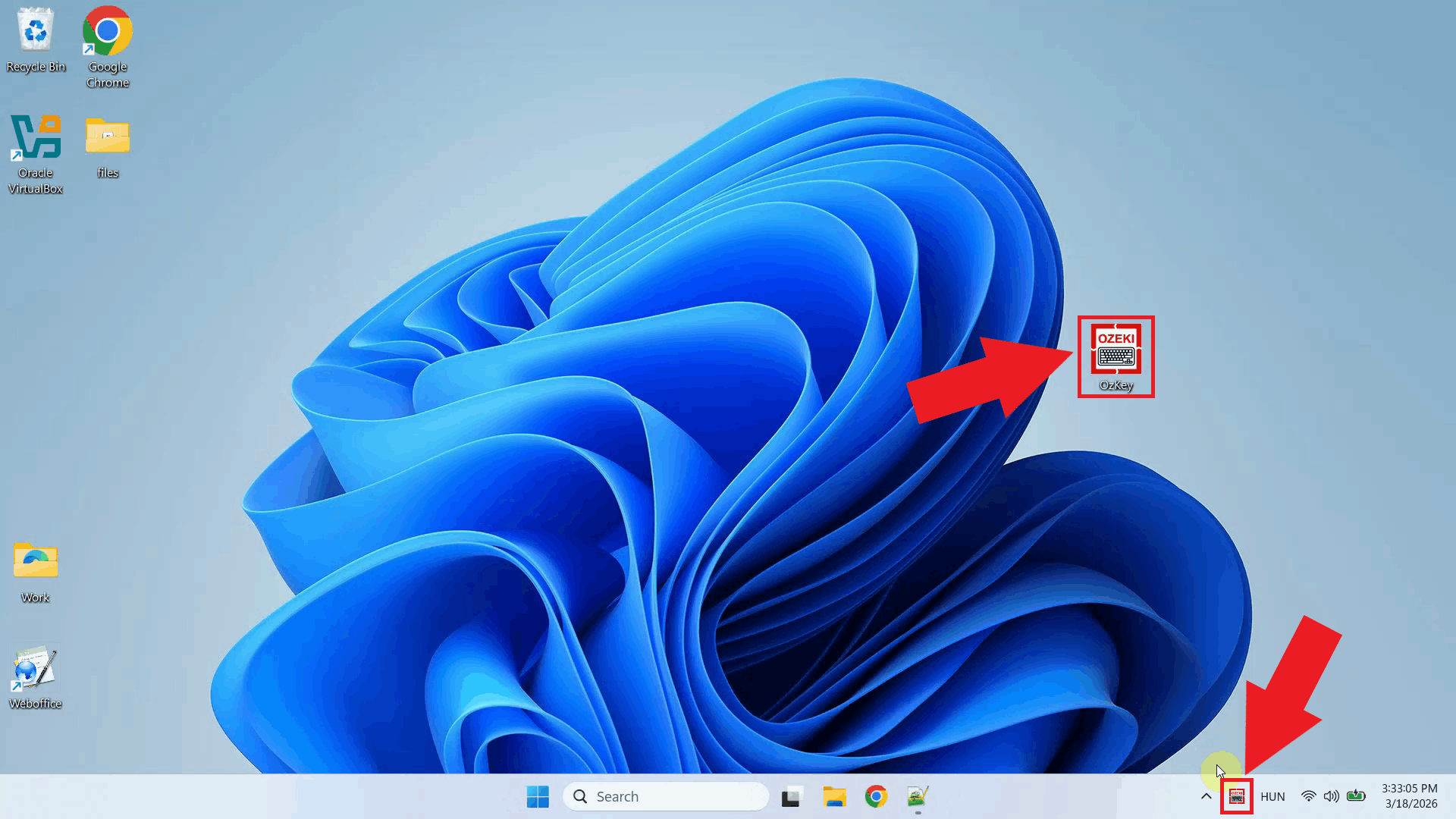

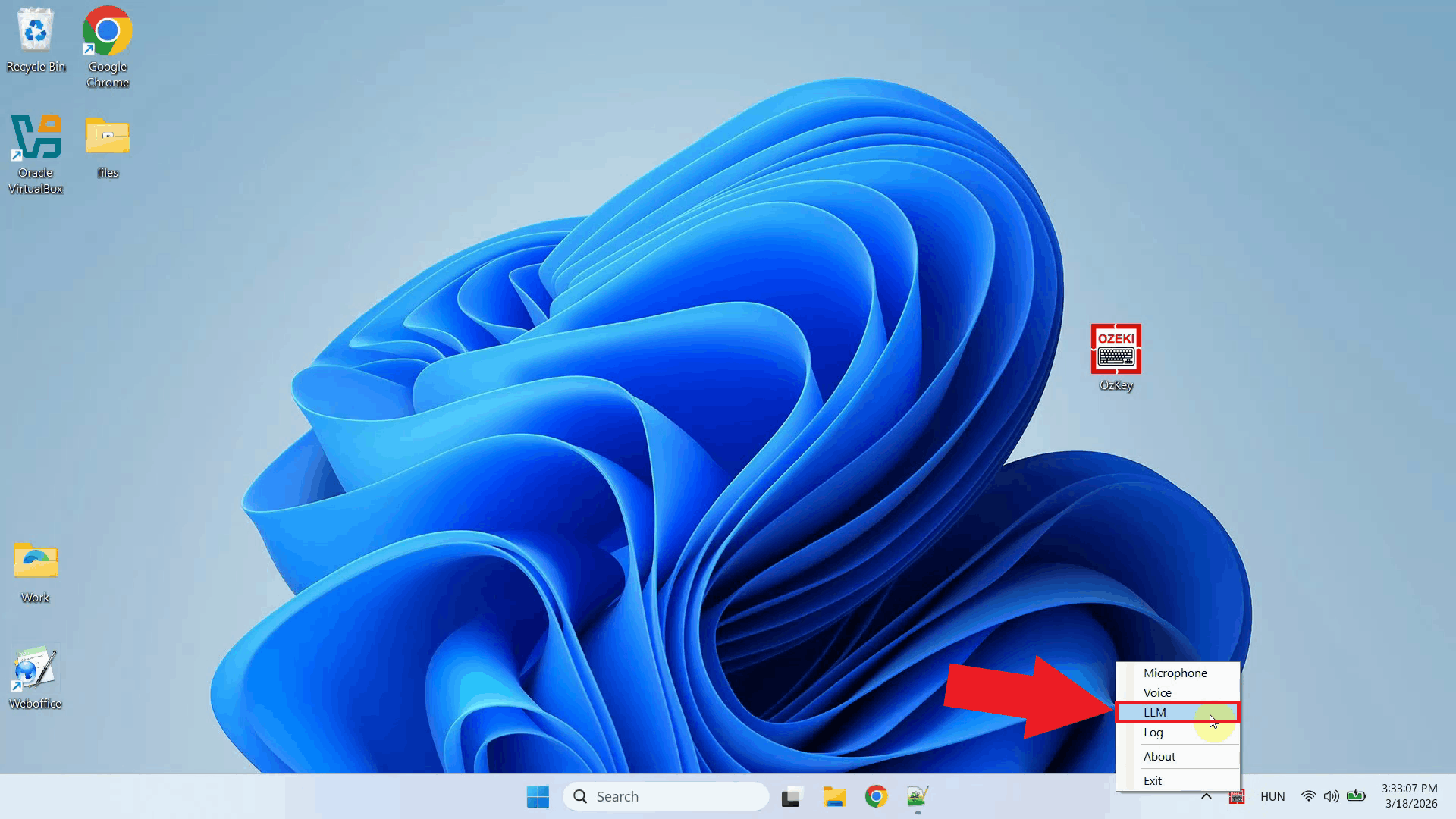

On your Windows machine, open Ozeki Voice Keyboard and locate its icon in the system tray in the bottom right corner of your taskbar (Figure 6).

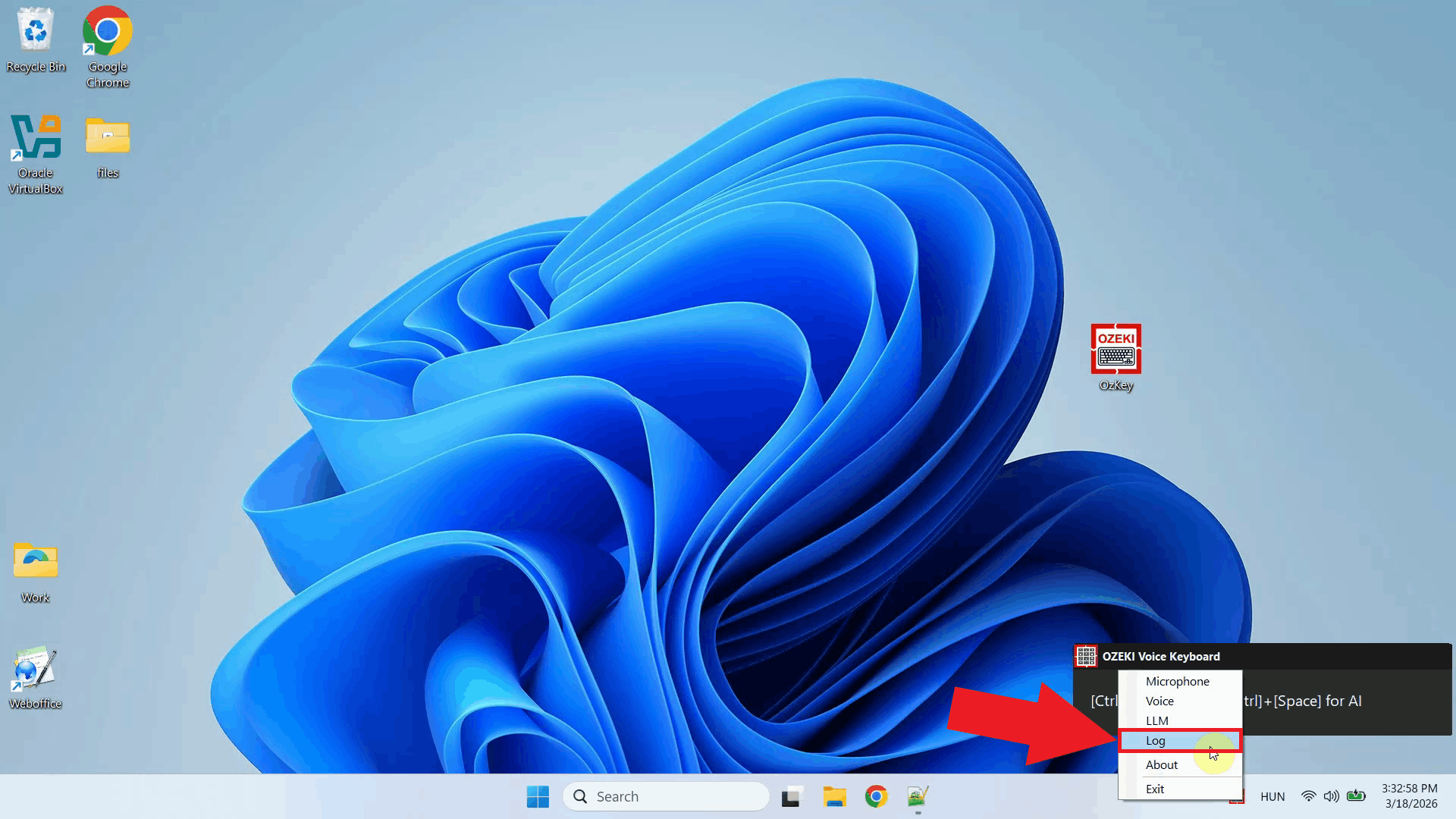

Before configuring the LLM settings, enable HTTP logging so you can verify that requests are reaching the Ubuntu server. Right-click the tray icon and navigate to Logs from the context menu (Figure 7).

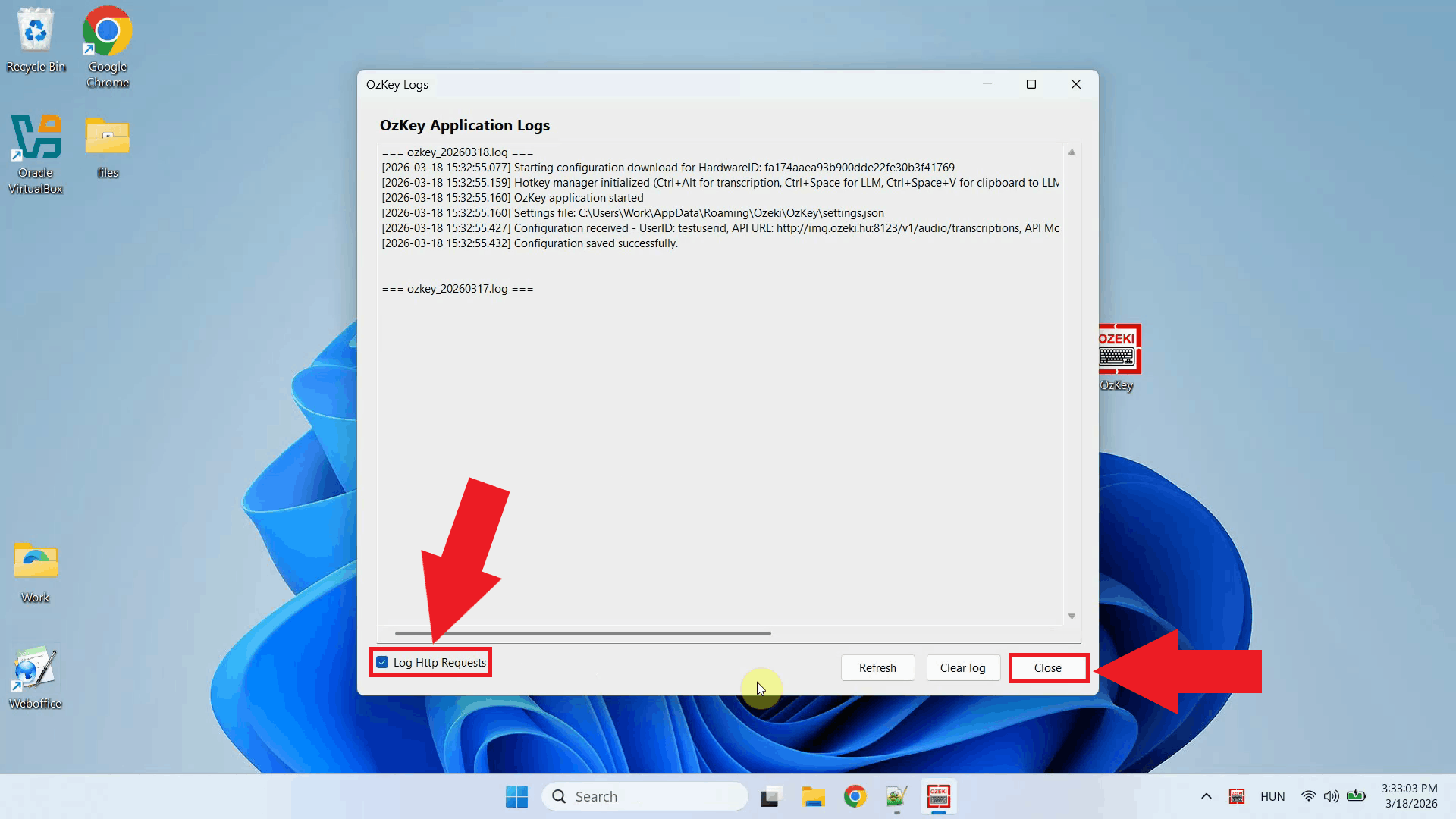

Enable HTTP logging and close the window. Outgoing requests to the vLLM server will now be recorded and visible in the log viewer (Figure 8).

Right-click the tray icon again and open the LLM settings from the context menu (Figure 9).

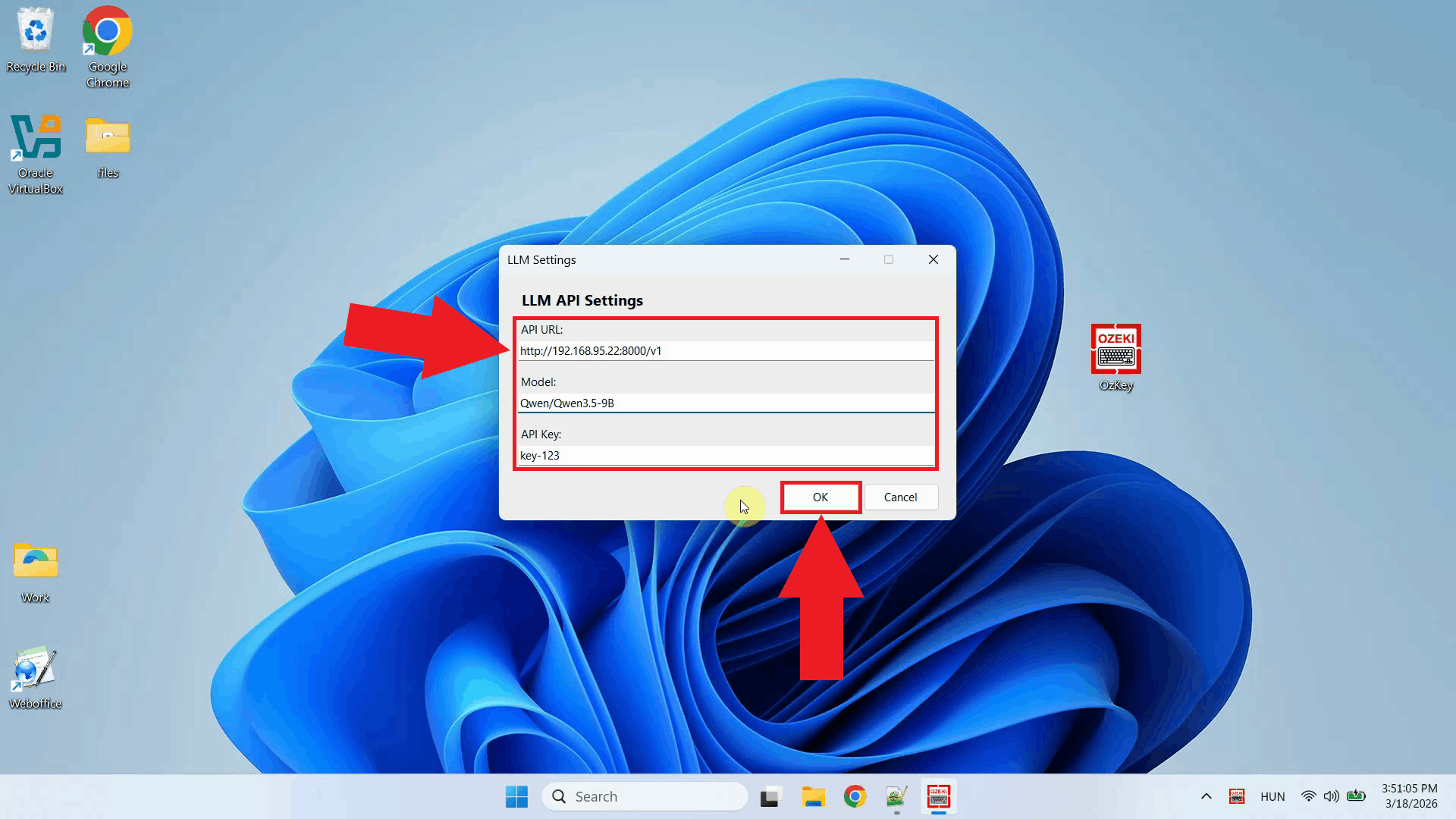

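Enter the API URL of the Ubuntu machine and the model name exactly as vLLM serves it; by default this is the name passed to vllm serve, Qwen/Qwen3.5-9B. You can leave the API key field empty, since vLLM does not require authentication unless it is started with an API key. Click OK to save the settings (Figure 10).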

http://{your-ubuntu-ip}:8000/v1

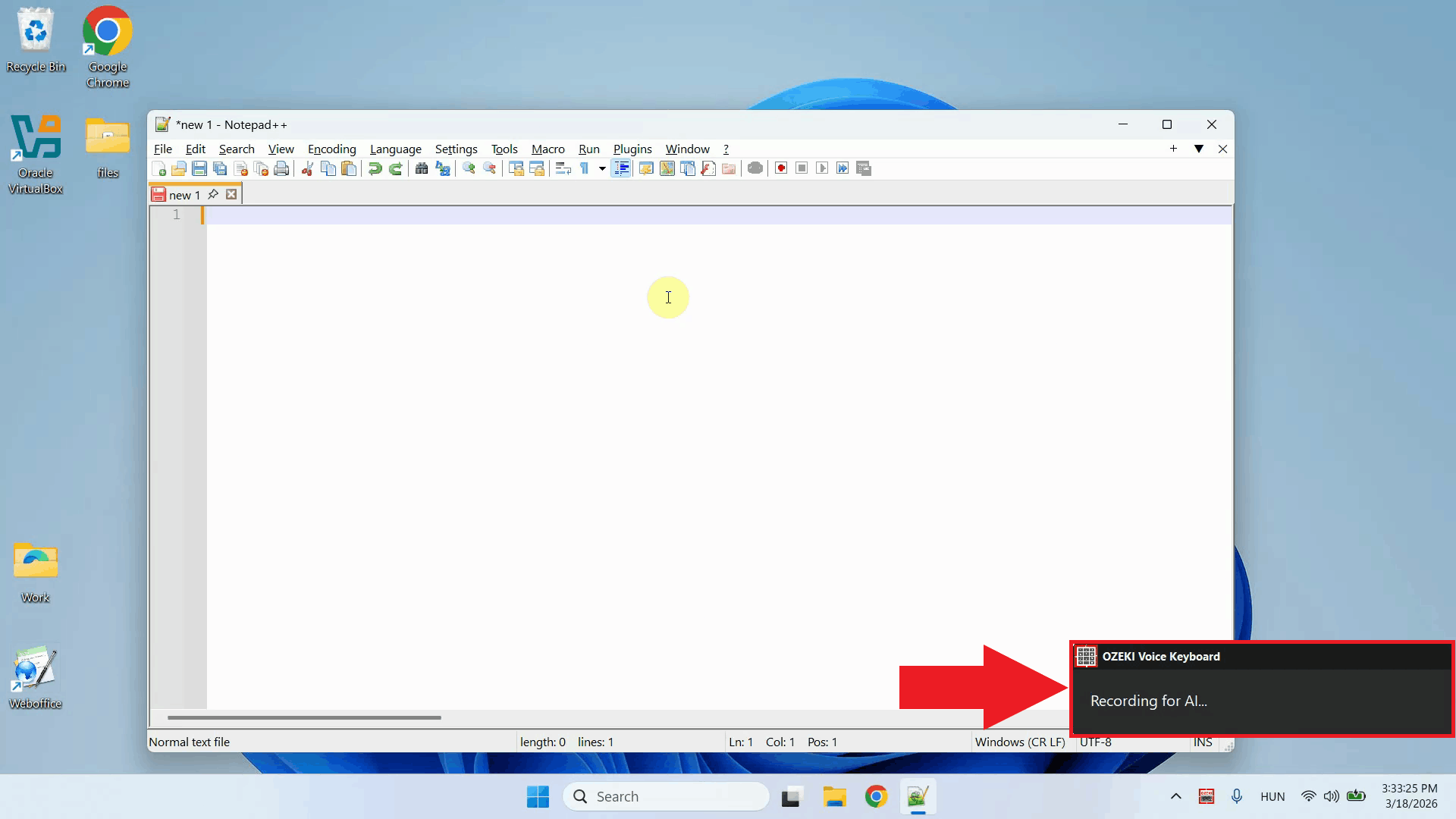
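To rule out URL or model-name mistakes before testing with your voice, you can send a chat completion request directly to the same API URL, assuming the OpenAI-compatible /v1/chat/completions endpoint that vLLM exposes. chat_payload and send_chat are hypothetical helpers, and max_tokens defaults to an arbitrary 128; the payload builder does not escape JSON, so keep double quotes and backslashes out of the prompt.

```shell
# Hypothetical helper: build an OpenAI-style chat completion request body.
# No JSON escaping: model and prompt must not contain " or \.
# $1 = model, $2 = prompt, $3 = max_tokens (defaults to 128)
chat_payload() {
  printf '{"model": "%s", "messages": [{"role": "user", "content": "%s"}], "max_tokens": %s}\n' \
    "$1" "$2" "${3:-128}"
}

# Hypothetical helper: POST the request to the vLLM server.
# $1 = base URL (e.g. http://192.168.1.50:8000/v1), $2 = model, $3 = prompt
send_chat() {
  curl -sS "$1/chat/completions" \
    -H "Content-Type: application/json" \
    -d "$(chat_payload "$2" "$3")"
}
```

For example, `send_chat http://192.168.1.50:8000/v1 Qwen/Qwen3.5-9B "What is the capital of France?"` returns a JSON response whose generated text is under choices[0].message.content. If this works but the Voice Keyboard does not, compare the URL and model name in the LLM settings with the ones used here.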

To test the AI assistant, place your cursor in any input field, then press and hold Ctrl + Space and speak your question into the microphone. Once you release the keys, the recording is transcribed and the resulting text is forwarded to the vLLM server on the Ubuntu machine as a prompt (Figure 11).

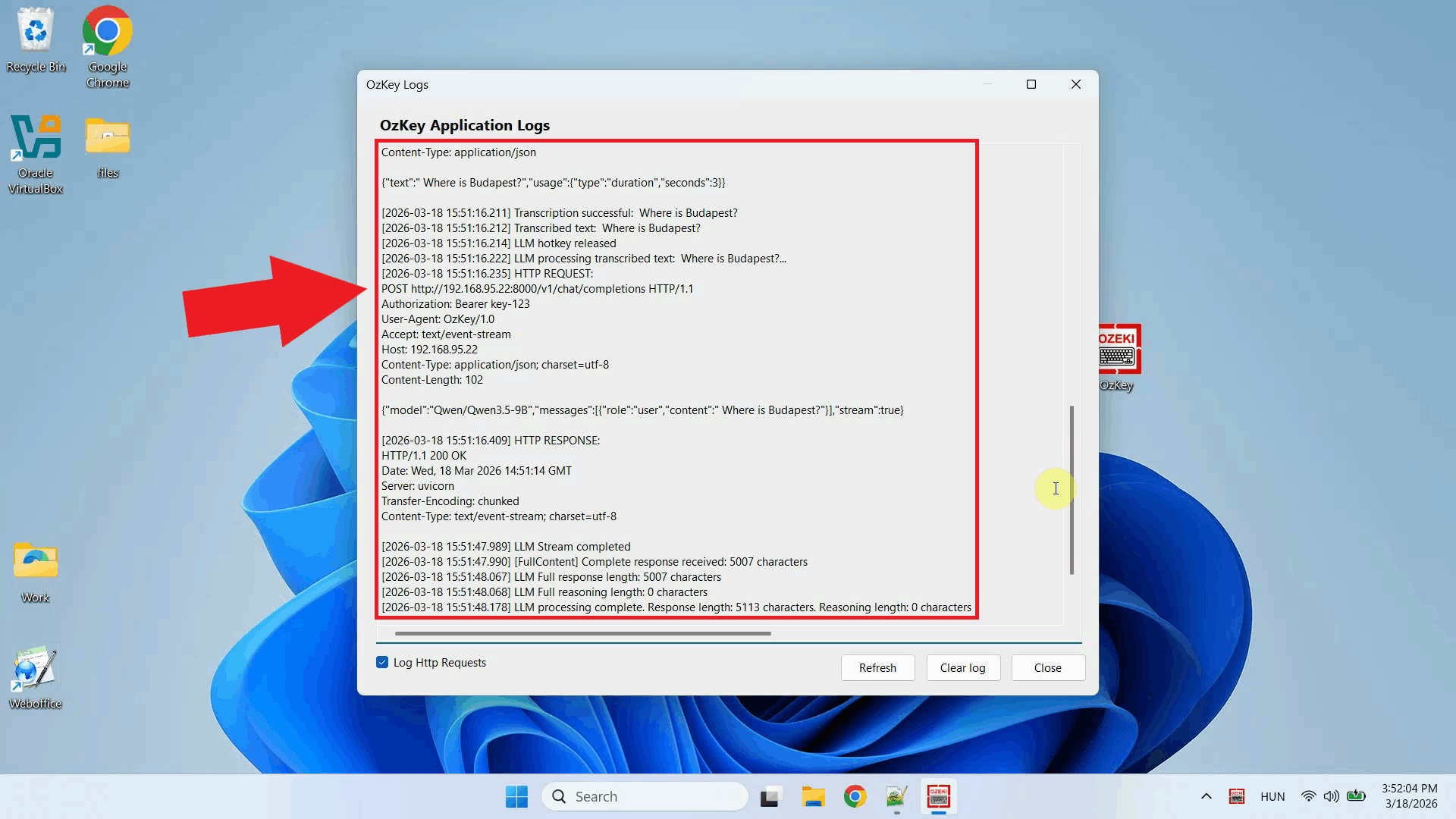

Open the Logs window to verify the request. You should see an HTTP request to the vLLM server's /v1/chat/completions endpoint on the Ubuntu machine, confirming that Ozeki Voice Keyboard is successfully communicating with the remote LLM backend (Figure 12).

Conclusion

You have successfully configured a vLLM server on Ubuntu and connected it to Ozeki Voice Keyboard on Windows. The AI assistant will now use your Ubuntu machine's GPU to generate responses, giving you a high-performance, fully local LLM backend that operates entirely within your own network without relying on any external cloud service.