How to set up Whisper Speech Detector on Ubuntu Linux

This guide demonstrates how to set up a Whisper speech recognition server on an Ubuntu machine and connect it to Ozeki Voice Keyboard running on Windows. You will learn how to install the required dependencies, start the Whisper server using vLLM, and configure Ozeki Voice Keyboard to send audio to the Ubuntu machine for transcription over the network.

What is Whisper?

Whisper is an open-source speech recognition model developed by OpenAI. In this setup, it is served on an Ubuntu machine using vLLM, which exposes an OpenAI-compatible endpoint on the network. Ozeki Voice Keyboard, running on a separate Windows machine, sends recorded audio to this endpoint and receives the transcribed text in response.

System architecture

The diagram below illustrates how the Windows machine running Ozeki Voice Keyboard communicates with the Ubuntu machine hosting the Whisper server.

Steps to follow

Before proceeding, make sure Anaconda is installed on your Ubuntu machine. The vllm package will be installed via pip during the setup process.

- Create and activate the Conda environment

- Install vLLM

- Start the Whisper server

- Connect Whisper to Ozeki Voice Keyboard

Quick reference commands

# Create a Python 3.12 Conda environment

conda create -n whisper python=3.12

# Activate the environment

conda activate whisper

# Install vLLM with audio support

pip install vllm vllm[audio]

# Start the Whisper server

vllm serve openai/whisper-small

# API endpoint (replace with your Ubuntu machine's IP)

http://{ubuntu-machine-ip}:8000/v1/audio/transcriptions

How to set up Whisper on Ubuntu video

The following video shows how to set up and run the Whisper speech recognition server on Ubuntu step-by-step. The video covers creating the Conda environment, installing vLLM, and starting the server.

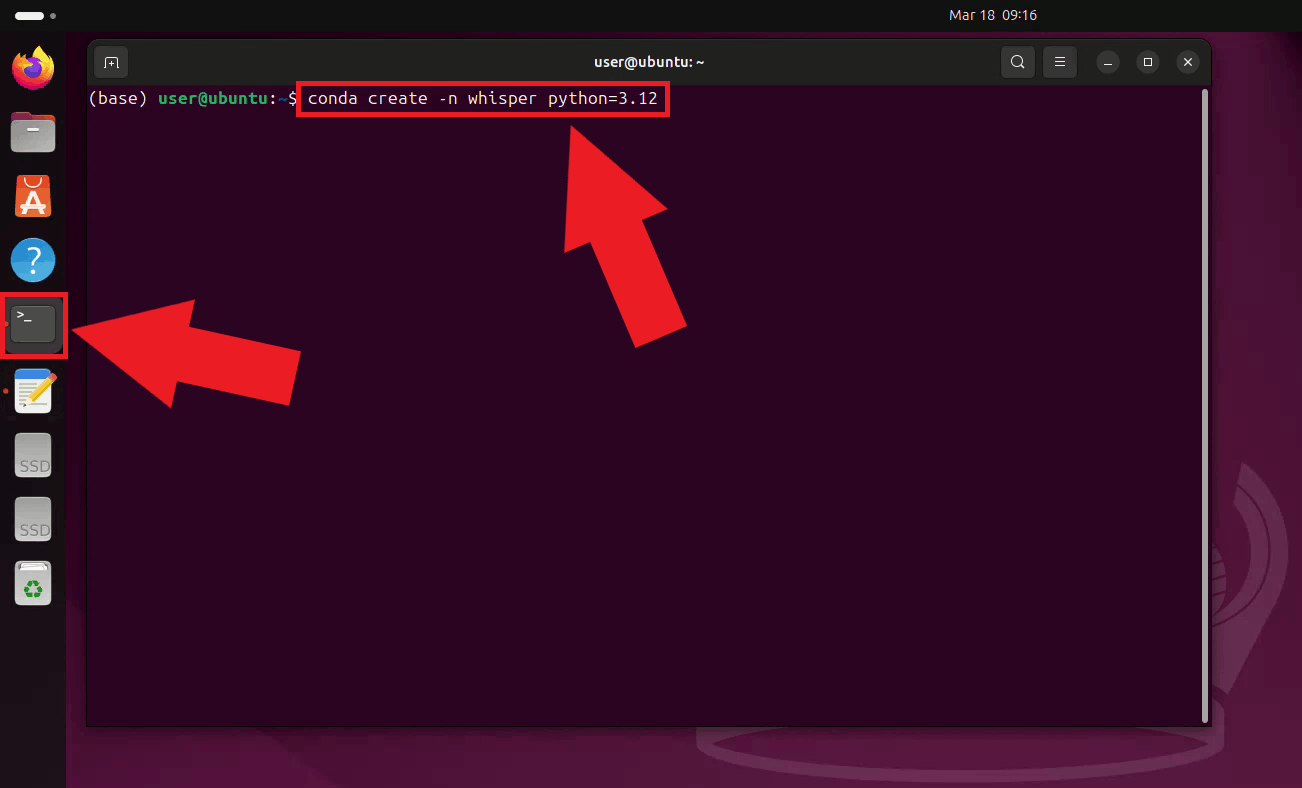

Step 1 - Create and activate the Conda environment

Open a terminal on your Ubuntu machine and create a dedicated Conda environment with Python 3.12 for the Whisper server. Using a separate environment keeps its dependencies isolated from other Python projects on your system (Figure 1).

conda create -n whisper python=3.12

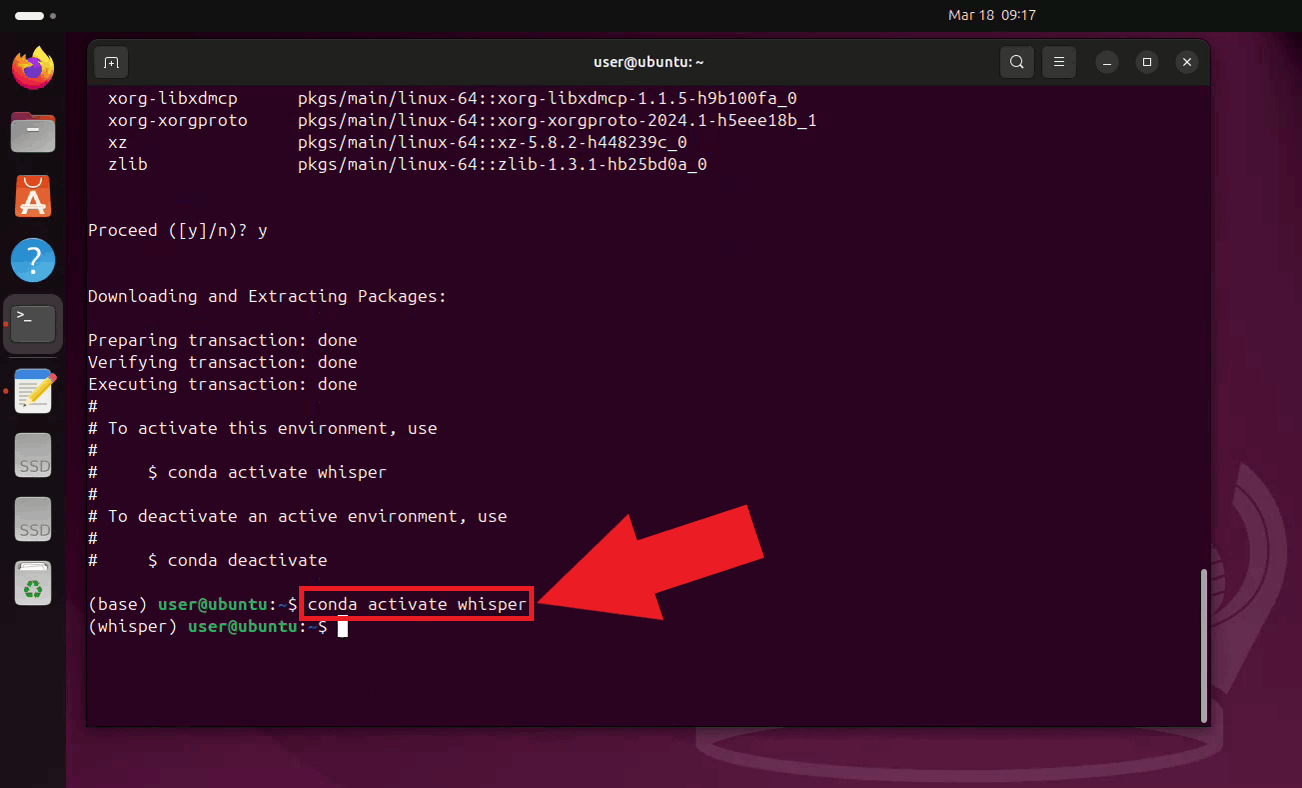

Activate the newly created environment. Your terminal prompt will update to show the active environment name (Figure 2).

conda activate whisper

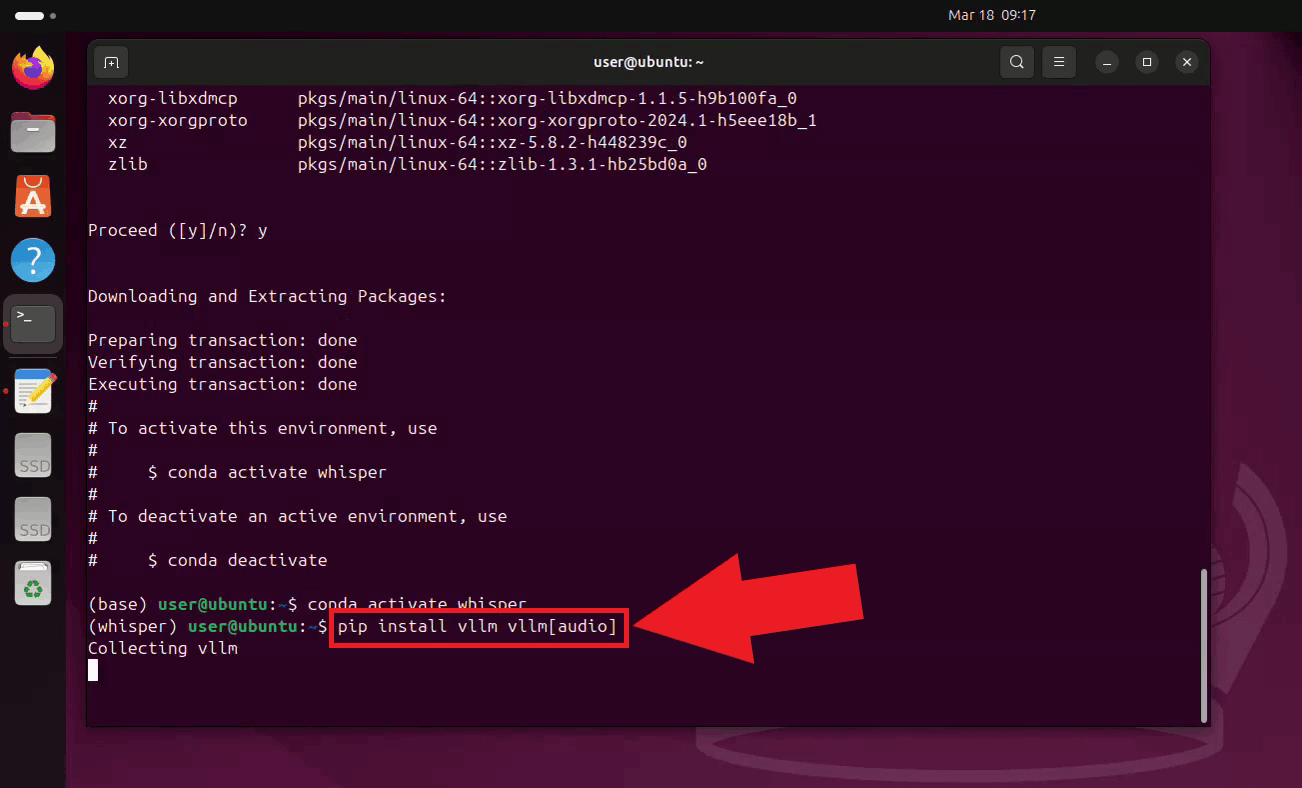
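If you prefer to confirm the activation from Python rather than from the prompt, a minimal sketch such as the following can help. It assumes the environment name and Python version used in this guide, and it relies on the CONDA_DEFAULT_ENV variable that Conda sets on activation. The function name describe_environment is illustrative, not part of any tool.

```python
import os
import sys

EXPECTED_ENV = "whisper"   # environment name used in this guide
EXPECTED_PYTHON = (3, 12)  # Python version requested when creating it


def describe_environment(environ=None, version_info=None):
    """Return (env_name, (major, minor), ok) for the given or current interpreter."""
    environ = os.environ if environ is None else environ
    version_info = sys.version_info if version_info is None else version_info
    # Conda exports the active environment name in CONDA_DEFAULT_ENV.
    env_name = environ.get("CONDA_DEFAULT_ENV", "")
    python_version = (version_info[0], version_info[1])
    ok = env_name == EXPECTED_ENV and python_version == EXPECTED_PYTHON
    return env_name, python_version, ok


env_name, python_version, ok = describe_environment()
print(f"Conda environment: {env_name or '(none)'}")
print(f"Python version: {python_version[0]}.{python_version[1]}")
print("Environment matches this guide" if ok else "Environment differs from this guide")
```

Save it to a file and run it with python inside the activated terminal. If it reports a different environment, run conda activate whisper again before continuing.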

Step 2 - Install vLLM

With the environment active, install vLLM along with its audio support extra using pip.

The vllm[audio] extra includes all dependencies needed to serve Whisper as an audio transcription endpoint (Figure 3).

pip install vllm vllm[audio]

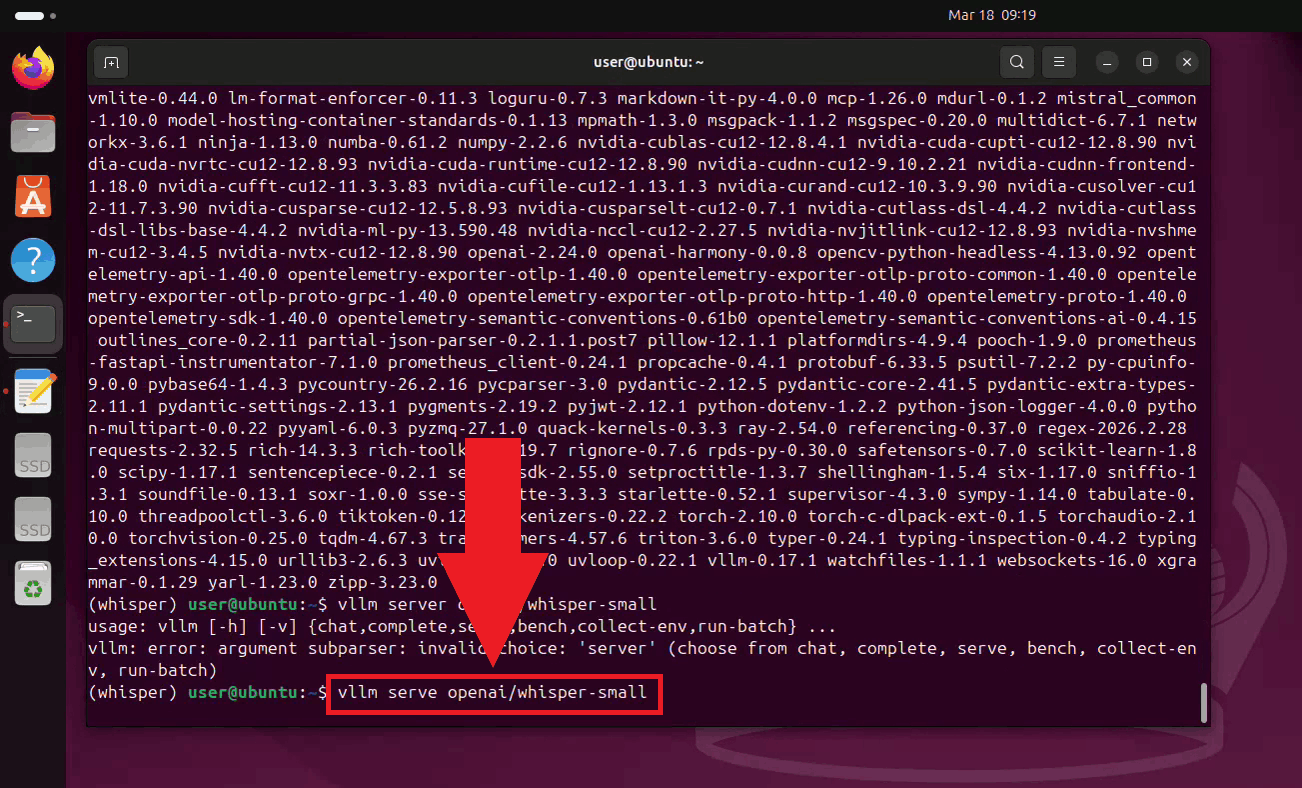

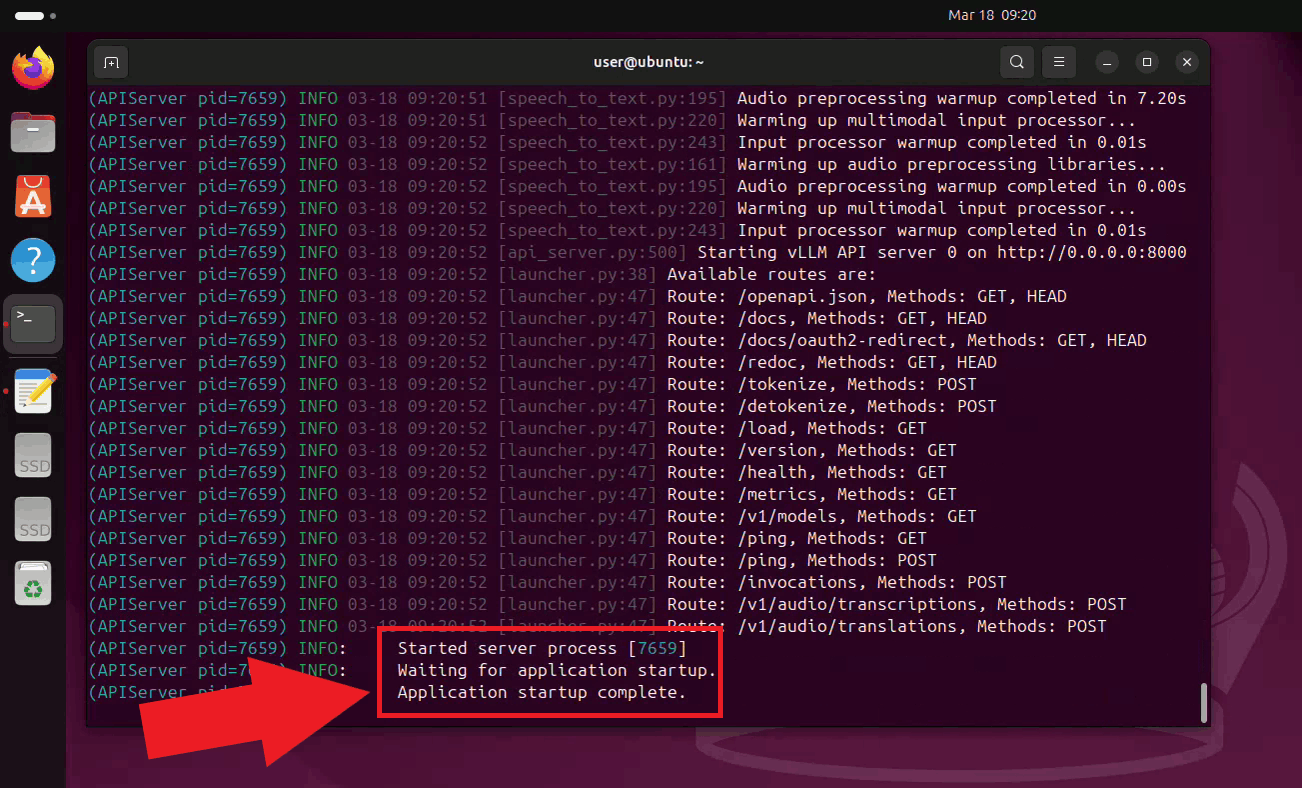
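Before starting the server, you may want to confirm that the installation landed in the active environment. The sketch below uses Python's standard importlib.metadata module to look up the installed vllm version. The helper name installed_version is an assumption made for illustration.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package):
    """Return the installed version string of a package, or None if it is absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


vllm_version = installed_version("vllm")
if vllm_version is None:
    print("vllm is not installed in this environment")
else:
    print(f"vllm {vllm_version} is installed")
```

If the script reports that vllm is missing, check that the whisper environment is active and repeat the pip command.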

Step 3 - Start the Whisper server

Start the Whisper server by running the vllm serve command with the small model. You can also use a larger Whisper model if your hardware allows. On the first run the server downloads the model, which may take a few minutes depending on your connection speed (Figure 4).

vllm serve openai/whisper-small

Once the server has started, it listens for transcription requests on port 8000. Keep this terminal open for the duration of your session. The endpoint is accessible to other machines on your network at http://{your-ip-address}:8000/v1/audio/transcriptions (Figure 5).
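To check that the server is reachable before moving to the Windows machine, you can query its model list. The sketch below assumes the server exposes the OpenAI-style /v1/models route on port 8000 and returns a JSON object with a data list of entries that each carry an id. The function names and the sample address in the comment are hypothetical.

```python
import json
import urllib.request


def models_url(host, port=8000):
    """Build the model list URL for a server on the given host and port."""
    return f"http://{host}:{port}/v1/models"


def parse_model_ids(payload):
    """Extract model IDs from an OpenAI-style model list response."""
    return [entry["id"] for entry in payload.get("data", [])]


def list_models(host, port=8000, timeout=5):
    """Fetch the model IDs served at host:port (needs a running server)."""
    with urllib.request.urlopen(models_url(host, port), timeout=timeout) as response:
        return parse_model_ids(json.load(response))


# Example call, with a placeholder address to replace with your own:
# print(list_models("192.168.1.50"))
```

If the call lists openai/whisper-small, the server is up and answering on the network. A timeout or connection error usually points to a wrong IP address, a firewall rule, or a server that is still loading the model.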

Step 4 - Connect Whisper to Ozeki Voice Keyboard

The following video shows how to connect the Ubuntu Whisper server to Ozeki Voice Keyboard on Windows and verify that transcription is working correctly. The video covers locating the tray icon, enabling HTTP logging, configuring the Voice settings, and confirming the connection through the log viewer.

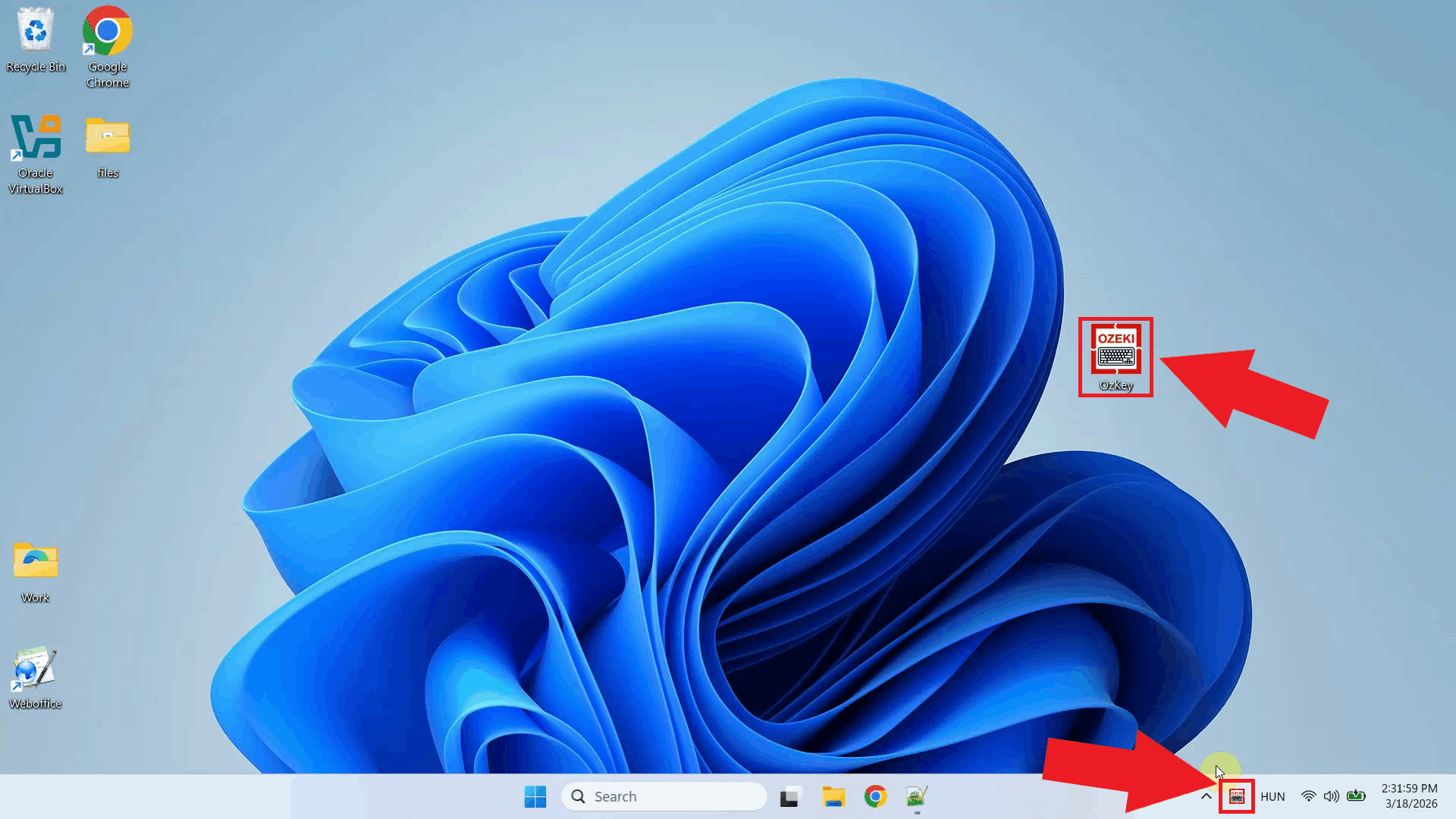

On your Windows machine, open Ozeki Voice Keyboard and locate its icon in the system tray, in the bottom-right corner of your taskbar (Figure 6).

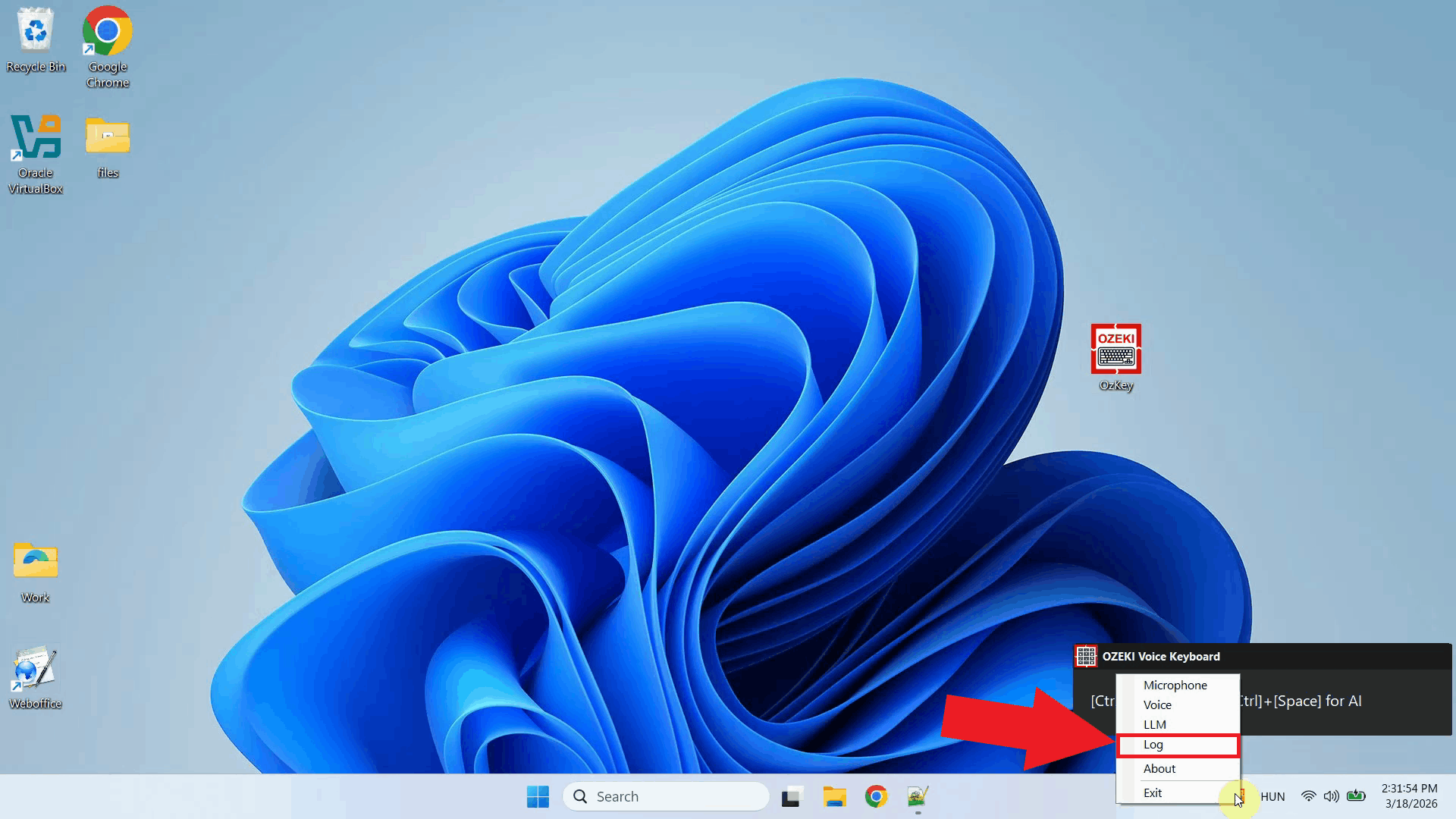

Before configuring the Voice settings, enable HTTP logging so you can verify that requests are reaching the Ubuntu Whisper server. Right-click the tray icon and navigate to Logs from the context menu (Figure 7).

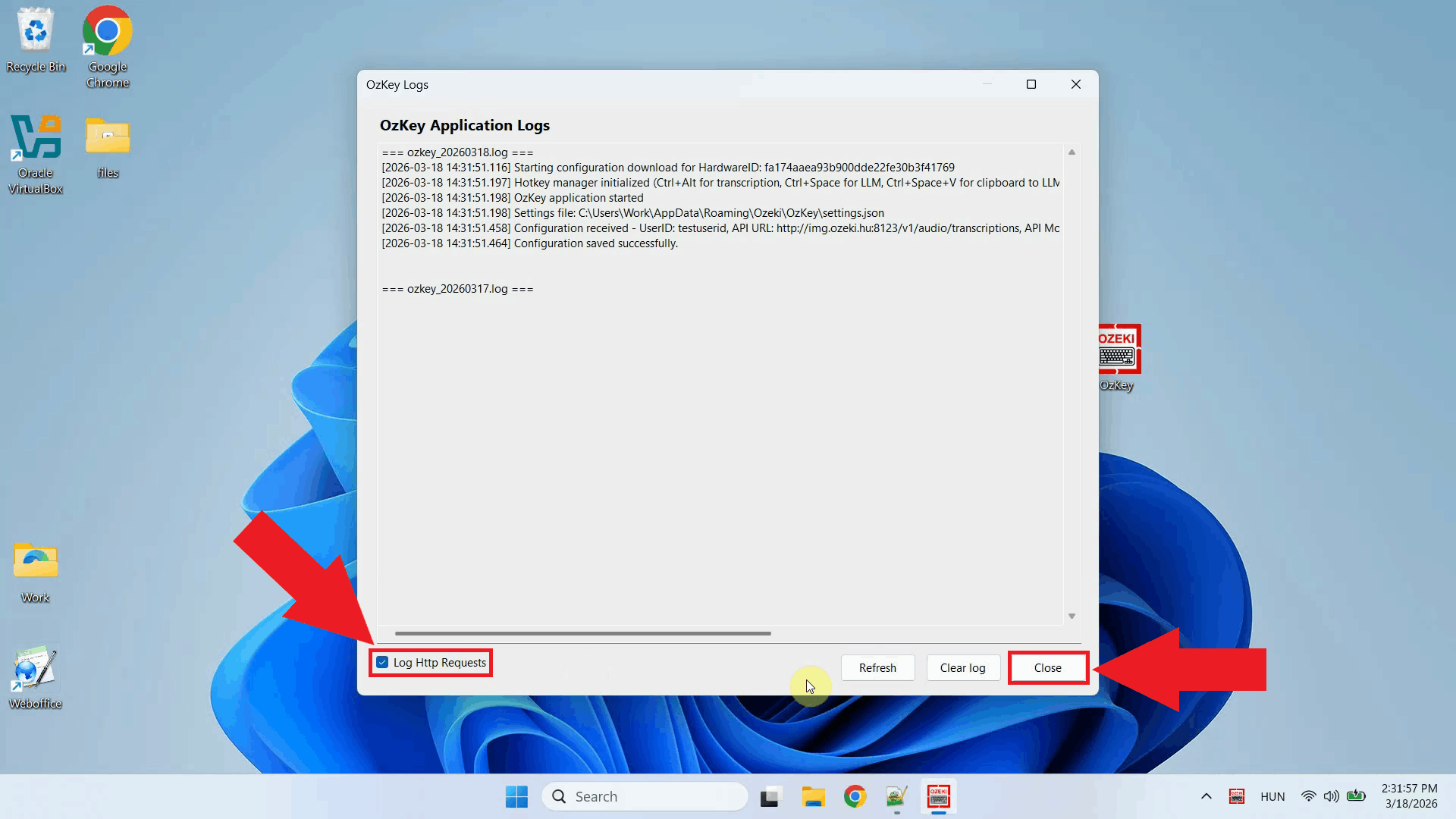

In the Logs window, enable HTTP logging and close the window. This will allow you to monitor outgoing requests to the Whisper server after configuration (Figure 8).

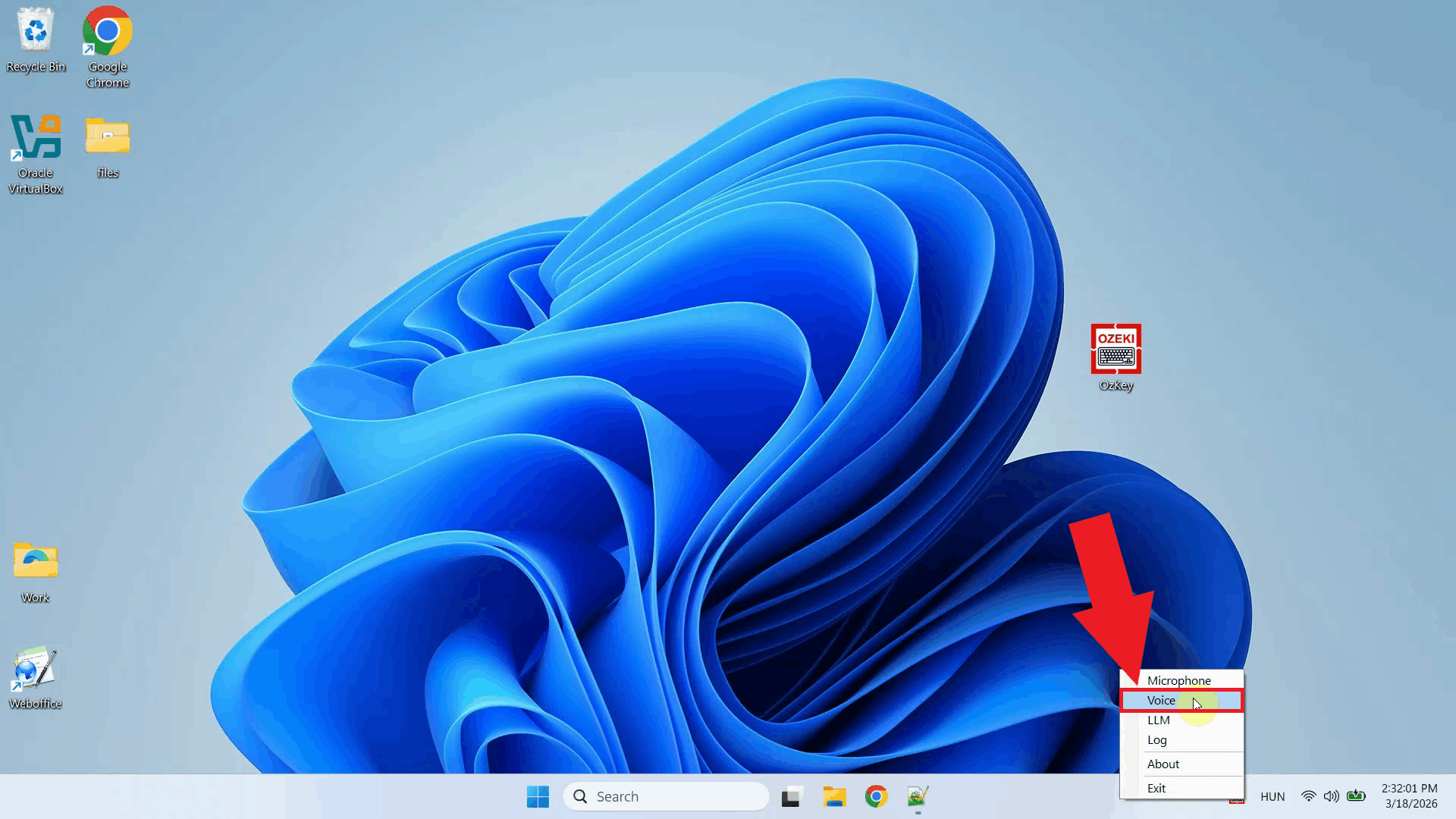

Right-click the tray icon again and open the Voice settings from the context menu (Figure 9).

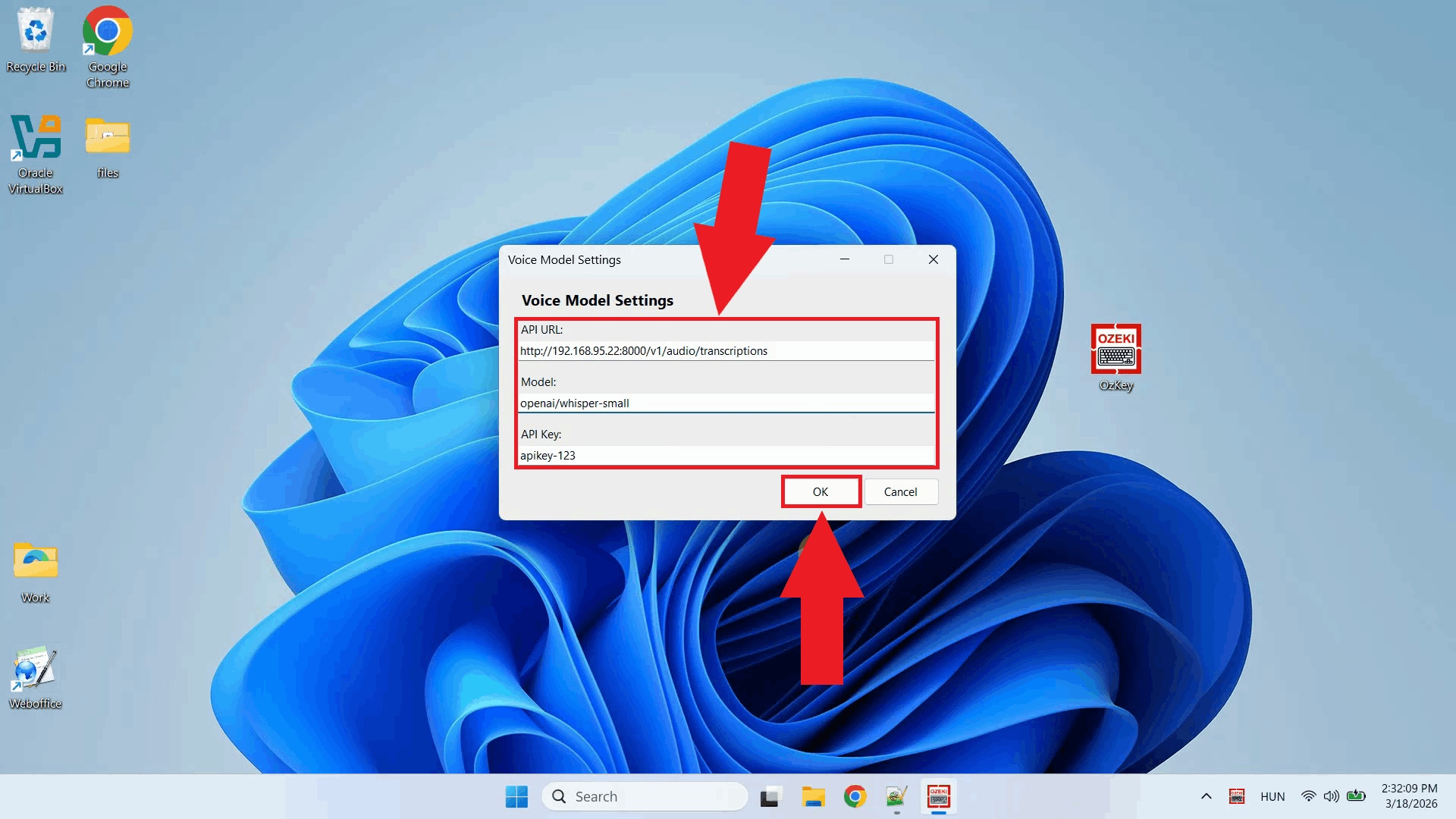

Enter the API URL of your Ubuntu machine and specify the model ID (openai/whisper-small in this guide). You can leave the API key field empty, since the local server does not require authentication. Click OK to save the settings (Figure 10).

http://{ubuntu-machine-ip}:8000/v1/audio/transcriptions

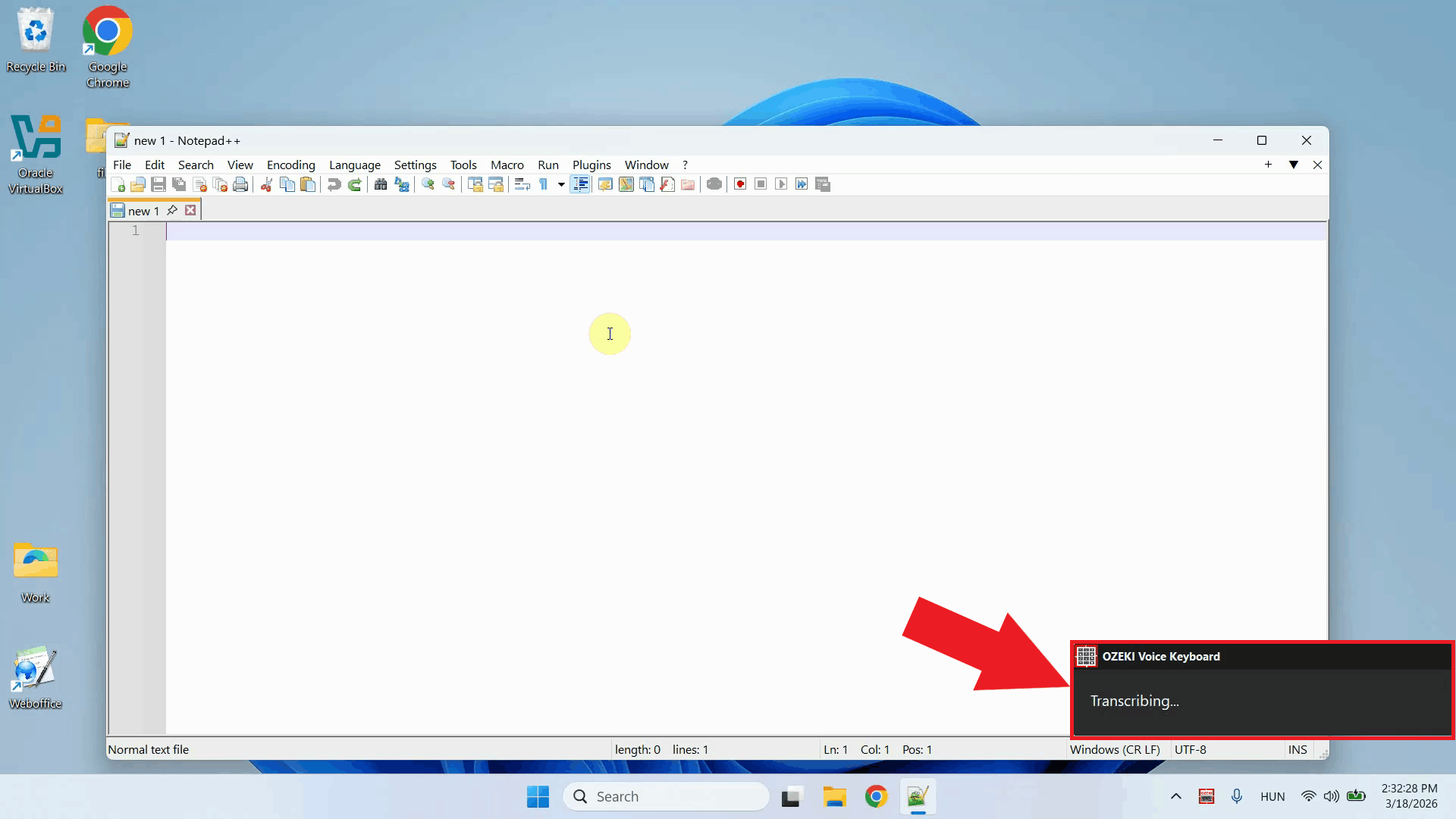
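To test the same endpoint independently of Ozeki Voice Keyboard, you can send an audio file to it yourself. The following is a minimal sketch using only the Python standard library. It assumes the endpoint accepts an OpenAI-style multipart/form-data upload with model and file fields and answers with JSON containing a text field. The function names, the sample address, and the file name are hypothetical.

```python
import json
import mimetypes
import urllib.request
import uuid
from pathlib import Path


def transcription_url(host, port=8000):
    """Build the transcription endpoint URL for the given host and port."""
    return f"http://{host}:{port}/v1/audio/transcriptions"


def encode_multipart(fields, filename, file_bytes, boundary):
    """Build a multipart/form-data body from text fields and one file part."""
    parts = []
    for name, value in fields.items():
        header = (
            f"--{boundary}\r\n"
            f'Content-Disposition: form-data; name="{name}"\r\n\r\n'
            f"{value}\r\n"
        )
        parts.append(header.encode("utf-8"))
    content_type = mimetypes.guess_type(filename)[0] or "application/octet-stream"
    file_header = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="file"; filename="{filename}"\r\n'
        f"Content-Type: {content_type}\r\n\r\n"
    )
    parts.append(file_header.encode("utf-8"))
    parts.append(file_bytes)
    parts.append(f"\r\n--{boundary}--\r\n".encode("utf-8"))
    return b"".join(parts)


def transcribe(host, audio_path, model="openai/whisper-small", port=8000, timeout=120):
    """Upload an audio file and return the transcribed text (needs a running server)."""
    path = Path(audio_path)
    boundary = uuid.uuid4().hex
    body = encode_multipart({"model": model}, path.name, path.read_bytes(), boundary)
    request = urllib.request.Request(
        transcription_url(host, port),
        data=body,
        headers={"Content-Type": f"multipart/form-data; boundary={boundary}"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)["text"]


# Example call, with a placeholder address and file name to replace with your own:
# print(transcribe("192.168.1.50", "sample.wav"))
```

If this script returns text but Ozeki Voice Keyboard does not, the problem is most likely in the Voice settings rather than on the Ubuntu side.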

Test the setup by placing your cursor in any input field on the Windows machine and using the voice recording hotkey to dictate some text. The audio is sent to the Whisper server on the Ubuntu machine, and the transcription is pasted into the active field (Figure 11).

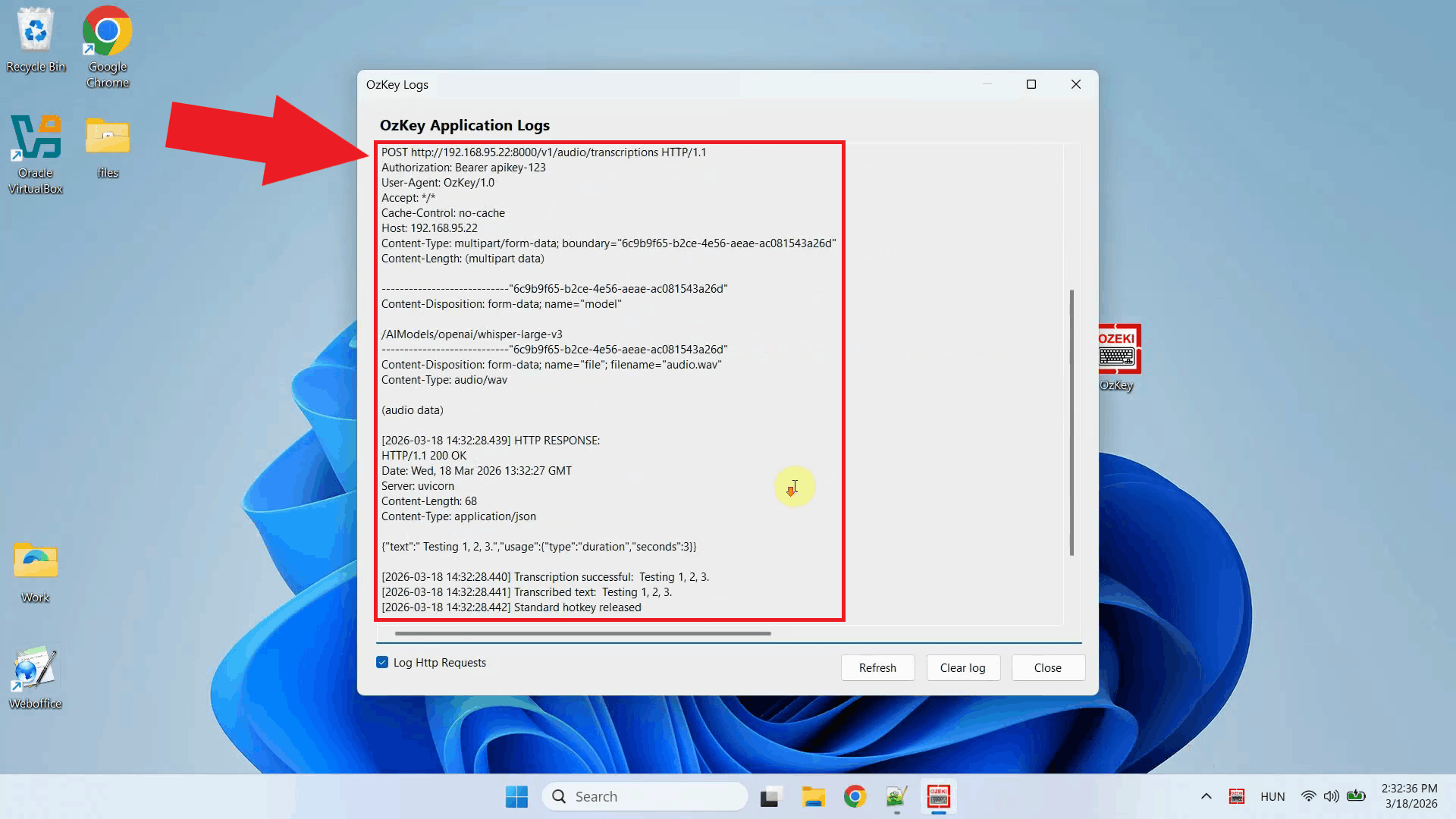

Open the Logs window to verify the request. You should see an HTTP request to the Ubuntu machine's /v1/audio/transcriptions endpoint, confirming that Ozeki Voice Keyboard is successfully communicating with the remote Whisper server (Figure 12).

To sum it up

You have successfully set up a Whisper speech recognition server on Ubuntu and connected it to Ozeki Voice Keyboard on Windows. This setup allows you to offload speech processing to a dedicated Linux machine on your network, keeping the Windows machine lightweight while still benefiting from fast and accurate voice transcription.